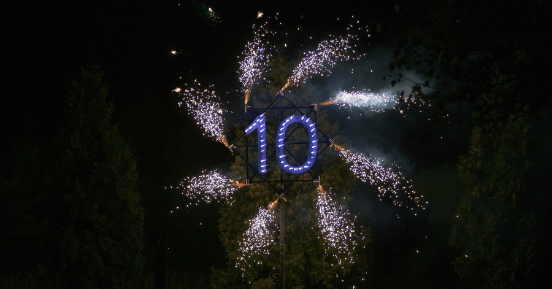

Ten Years of Zero Trust – From Least Privilege Access to Microsegmentation and Beyond

by Mendy Newman

Posted on September 22, 2020

Ten years ago, on September 14, 2010, John Kindervag of Forrester Research published “No More Chewy Centers: Introducing The Zero Trust Model Of Information Security,” his seminal article on the Zero Trust data security concept.

It was, at the time, a revolutionary way of viewing cybersecurity. More than simply another technique, Zero Trust provided a new paradigm – a completely different way to view data security.

Prior to this red-letter date, the prevailing model for data security was an M&M: a hard, crunchy outside with a soft chewy center. The hard, crunchy part keeps foreign threats out, while the soft inside is a trusted zone. A similar metaphor that is often bandied about in security circles is the castle-and-moat. The moat, along with the thick castle walls, keeps outsiders away, while the royals and treasures within stay safe and protected. Of course, there’s a drawbridge — but it’s heavily defended.

Internet access functions as the drawbridge to the data castle. Just as the drawbridge must be lowered to allow vital supplies to get in, no organization today can survive without the information that flows in and out via the web. Of course, data security teams invest serious effort and sizeable sums to ensure that no enemy agents are hidden in that essential stream.

But what happens if, despite all the defenses, someone succeeds in breaching them? What if a royal guard, wearing the king’s colors and carrying his flag, is actually an enemy agent in a trusted disguise? Once they are in, everything – the royal jewels and the royals themselves – is exposed and in danger. And what if that supposedly loyal footman is really disloyal, and lowers the bridge to allow marauders in?

The Fallacy of the “Trusted Insider”

Kindervag cited the story of Philip Cummings, a trusted employee of Teledata Communications (TCI), which provided software that enabled banks to obtain consumer credit information from credit history bureaus. As a help desk employee, Cummings was trusted with login credentials for systems of the bureaus TCI served.

It didn’t take much to convince Cummings to betray that trust: For a mere $60 apiece, Cummings broke into the bureaus and stole 30,000 credit reports for the criminals who paid him. For them, it was a good deal: Why hack your way in, when bribing a trusted employee is easier and much less likely to raise any red flags?

And, in fact, the red flags stayed down. The thefts were only discovered when one of the credit history bureau’s customers noticed a problem. After all, no untrusted outsider ever got in.

The Original Zero Trust Concept

The philosophy behind Zero Trust is mostly the same today as it was ten years ago (more from us on this in future posts – stay tuned!). In Kindervag’s words:

“There is a simple philosophy at the core of Zero Trust: Security professionals must stop trusting packets as if they were people. Instead, they must eliminate the idea of a trusted network (usually the internal network) and an untrusted network (external networks). In Zero Trust, all network traffic is untrusted. Thus, security professionals must verify and secure all resources, limit and strictly enforce access control, and inspect and log all network traffic.”

The report points out that the “trust but verify” model doesn’t work – too often, it’s all trust and very little verify. Kindervag identified two key weaknesses in the status quo:

- Insiders can’t implicitly be trusted, as the Cummings case shows.

- Packets aren’t people. Even if you could trust a person (you can’t), a data packet is not a person – you can’t know with certainty where it’s originating or who sent it. No packets should automatically be assumed to be trustworthy.

To address these issues, the paper proposed three principles that comprise the core of the Zero Trust approach:

- All resources must be accessed securely. All traffic, internal or external, is assumed to be threat traffic until it is authorized, inspected and secured.

- “Least Privilege” strategies should be applied and enforced through access control, allowing users to access only the data they need to do their jobs.

- Inspect and log all network traffic. Just logging isn’t enough – traffic must also be verified as legitimate in real time.
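The three principles above can be illustrated with a minimal sketch. It is not taken from Kindervag's report: the `Request` and `ZeroTrustGate` names, the `grants` table and the sample resources are all hypothetical, and a real deployment would involve far more than one in-memory check. The sketch only shows the shape of the idea: the origin of a request earns no trust, access is denied unless explicitly granted, and every decision is logged.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Request:
    user: str
    mfa_verified: bool  # did the user pass multifactor authentication?
    resource: str
    origin: str         # "internal" or "external"; deliberately never consulted


@dataclass
class ZeroTrustGate:
    # Hypothetical least-privilege grants: user -> resources needed for the job.
    grants: Dict[str, Set[str]]
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def authorize(self, req: Request) -> bool:
        # Principle 1: no request is trusted because of where it comes from,
        # so the identity check applies to internal and external traffic alike.
        if not req.mfa_verified:
            decision = "deny: identity not verified"
        # Principle 2: least privilege, with deny as the default.
        elif req.resource not in self.grants.get(req.user, set()):
            decision = "deny: resource not granted"
        else:
            decision = "allow"
        # Principle 3: every decision is logged, whether allowed or denied.
        self.audit_log.append((req.user, req.resource, decision))
        return decision == "allow"


gate = ZeroTrustGate(grants={"alice": {"payroll-db"}})
print(gate.authorize(Request("alice", True, "payroll-db", "external")))
print(gate.authorize(Request("alice", True, "hr-files", "internal")))
print(gate.audit_log)
```

In this sketch, the external request for a granted resource is allowed, while the internal request for a resource outside the user's grants is denied. Being "inside" changes nothing.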

Zero Trust Today

Zero Trust is a philosophy, a concept, a strategic approach. No comprehensive Zero Trust product is available for purchase. It’s an approach to take in everything that’s done and every decision that’s made regarding securing organizational systems.

If we were to issue a Zero Trust report card, how would it look, a decade later? Let’s look at each of the principles to see where we stand.

Accessing resources securely

To a significant extent, securing traffic today translates into authorizing users via passwords and verifying that authorization through multifactor authentication. Beyond demonstrating that they are entitled to access a resource, users must also prove that they are who they purport to be.

Once users are authenticated, however, access is too often less than secure. So, while web browsers may require users to supplement usernames and passwords with fingerprint scans or codes from their phones, once data is flowing from a website or cloud app, it’s short work for cybercriminals to tap into the browser. Similarly, once a hacker, using stolen credentials or by exploiting some vulnerability in the perimeter, connects into a network, they can laterally roam and freely attempt to access resources.

Least privilege access controls

More organizations than ever are talking about the need to microsegment their networks to limit user access to only the resources they need. What’s done in practice, however, is very different. Most users connect in, authenticate, and are granted network level access. Sure, they need an ID and password to access an app, but their machines can connect to the login screen of any asset on the network. This is a huge vulnerability, which hackers or malicious insiders can easily exploit.

Why is enforcing true least privilege access for users such a challenge? Well, one significant reason is that the process of determining what resources each individual needs is detailed, tedious work, and subject to change as users’ responsibilities change and new resources come online. In large organizations, the sheer manpower requirements for implementation make even group-level “less privilege” controls a challenge and “least” virtually unheard of.
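The gap between network-level access and microsegmented access can be sketched in a few lines. The segment and service names below are hypothetical, and a real policy would be enforced by network or access infrastructure rather than a Python set. The point is the default: on a flat network every machine can reach every login screen, while a microsegmented network permits only explicitly listed flows.

```python
# Hypothetical segments and services, for illustration only.
ALLOWED_FLOWS = {
    ("finance-laptops", "payroll-app"),
    ("helpdesk-laptops", "ticketing-app"),
}


def flat_network_can_connect(source_segment: str, service: str) -> bool:
    """Network-level access: any connected machine can reach any login screen."""
    return True


def microsegmented_can_connect(source_segment: str, service: str) -> bool:
    """Default deny: a connection is possible only if the flow is explicitly allowed."""
    return (source_segment, service) in ALLOWED_FLOWS


print(flat_network_can_connect("helpdesk-laptops", "payroll-app"))
print(microsegmented_can_connect("helpdesk-laptops", "payroll-app"))
print(microsegmented_can_connect("finance-laptops", "payroll-app"))
```

Even this toy allowlist hints at the maintenance burden described above: every new resource or change in responsibilities means another entry to add, review or remove.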

Real time traffic logging, inspection and verification

As is true for the other Zero Trust principles, significant strides have been made toward inspecting and verifying traffic in real time. The challenges here come from opposite sides: On the one hand, hackers excel at replicating legitimate sources and channels of traffic. And on the other, faced by frequent alerts triggered by legitimate users logging in from myriad personal devices and random locations, situation rooms lower sensitivity settings and miss actual security breaches.

The report card today? Good progress, but there’s lots more work to be done.

The Zero Trust Journey

Ten years in, it’s clear that to fully actualize the Zero Trust approach, network structures themselves need to change. And indeed, a host of new network structures and connectivity approaches – SDP, CASB, SASE and ZTNA – are eliminating the castle-and-moat secure perimeter paradigm and, beyond that, replacing even the cobbled-together solutions that companies turned to in order to achieve Zero Trust.

More than seven years after the Zero Trust concept was unveiled, in a report entitled Zero Trust eXtended (ZTX) Ecosystem, Forrester’s Chase Cunningham described how Zero Trust was evolving into a holistic applied security approach. He focused on powerful platforms that leverage software-controlled solutions to transform networks into microperimeters, selectively apply obfuscation techniques, and strictly control user access privileges. He stressed that Zero Trust solutions must be developed to address the entire digital ecosystem — users, devices, data, workloads and networks via automation and orchestration solutions – and importantly, be easy to integrate into holistic platforms, and easy to use.

Gartner has further developed the Zero Trust Security concept as well, especially in the context of enterprise digital transformation initiatives and the migration of applications and data to the cloud. As “The Future of Network Security is in the Cloud,” a 2019 Gartner report, states,

“The legacy ‘data center as the center of the universe’ network and network security architecture is obsolete and has become an inhibitor to the needs of digital business.”

With ongoing migration to SaaS and cloud applications, enterprise networks are extending well beyond the physical enterprise, and into the public domain. Similarly, the COVID-19 epidemic has blurred – perhaps permanently broken – the boundaries between internal users and those connecting from outside.

Zero Trust in Transition

The old secure-perimeter model is poised to be replaced by the cloud-delivered security of the “Secure Access Service Edge” (SASE). SASE relies on a Software-Defined Wide Area Network (SD-WAN) combined with network security functions such as Zero Trust Network Access (ZTNA) to provide users with low-latency, high-performance, secure access to the resources they need from anywhere, no matter where those resources reside. Security inspection is moved to the sessions, without routing traffic and requests through a physical data center for inspection.

SASE will eventually be able to provide a truly comprehensive Zero Trust approach to data security, but that’s still a few years away. As Gartner says, Zero Trust is a journey, not a destination. Removing implicit trust and reducing accumulated “risk debt” is a process that takes time.

Gartner estimates that it will be 2025 before at least one IaaS provider offers a comprehensive suite of SASE capabilities – but there is already a great deal of interest. In their 2019 report, they projected that 40% of enterprises will have strategies to implement SASE by that time. Pandemic-spurred digitization, however, is likely to shorten that timeline, as organizations seek more secure ways to manage both remote users and cloud-based solutions.

The Future of Zero Trust

Despite the new pressures of distributed workforces and vanishing perimeters, the promise of SASE and comprehensive Zero Trust platforms is still some time from being fulfilled. In the meantime, today’s organizations, most of which currently operate on hybrid cloud computing models, need to prioritize Zero Trust initiatives and start their journeys with trusted vendors who can deliver the controls that have the greatest impact on improving security outcomes.

User access and networking solutions are likely to be the low-hanging fruit that organizations address first in their efforts to remove implicit risk. Many organizations, for example, are adopting least privilege solutions that enable highly granular microsegmented access to secure resources in data centers as well as in the cloud. Combined with other Zero Trust tools such as multifactor authentication and remote browser isolation, these solutions form a robust Zero Trust approach that covers access to the network and the web, a pragmatic move that can put an organization ahead of the pack.

About Mendy Newman

Mendy is the Group CTO of Ericom's International Business operations. Based in Israel, Mendy works with Ericom's customers in the region to ensure they are successful in deploying and using its Zero Trust security solutions, including the ZTEdge cloud security platform.